Akka.NET with F# - Getting functional with Reactive systems

Early last month I had the pleasure of presenting on Akka.NET to the F# Sydney meetup user group. The presentation is largely an introduction to agent-based programming and the Actor model of computation with Akka.NET from a functional programming perspective, using the Akka.NET F# API.

The slides and code can be found on GitHub.

Optimistic Concurrency with Elasticsearch and NEST

In my last post I gave an example of how to use the bulk API to index many documents at a time into Elasticsearch. Once all of the documents have been indexed, it is very likely that we'll want to update individual documents within the index. In this post, we'll look at how to do this and at some of the features available for managing multiple concurrent update requests to a given document.

Geospatial search with Elasticsearch and NEST

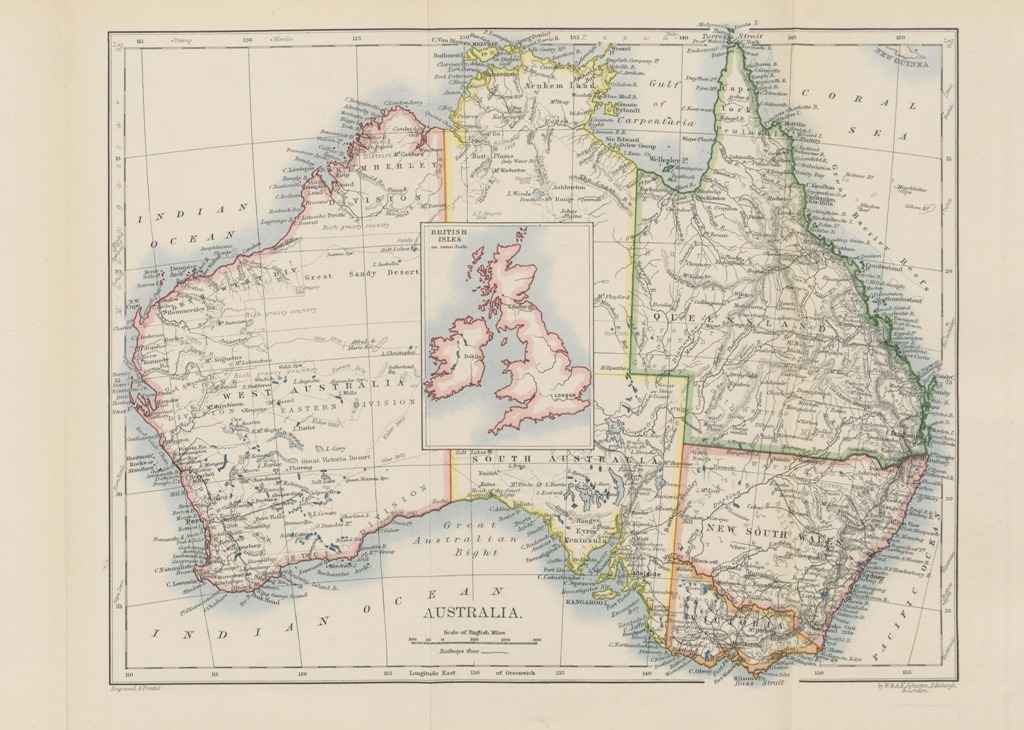

In this blog post I’m going to show you how to get started with geospatial search with Elasticsearch, using the official and fantastic .NET client for Elasticsearch, NEST. An example like this is best served with real data, so given this post was written from Australia, we’ll use the State Suburbs (SSC) from 2006 provided by the Australian Bureau of Statistics as the data of interest. It’s provided in ESRI Shapefile format and contains a collection of all the Australian suburbs, each with a name, code and geometry. We’ll need to extract each suburb from the Shapefile and serialize it to a format that can be persisted to Elasticsearch and queried.

TL;DR

I’ve put together a demo application to illustrate geospatial search using Elasticsearch and NEST.

ReSharper + SemanticMerge = Streamlined Development

I wanted to share a setup that has been working well for me for some time in keeping the layout of C# code consistent, easier to maintain and smoother when it comes to merging changes into git. It leverages ReSharper and SemanticMerge, two really awesome tools that allow you to remain focused on delivering features with minimum fuss.

With this setup, a simple keyboard shortcut restructures a C# code file according to defined settings, performing such actions as reordering members according to type, accessibility and name, removing regions, sorting using directives and updating file headers. When it comes to merging these changes into source control, we can merge with confidence, as SemanticMerge parses the C# file to determine changes at the class structural level. This means we can happily reorder members ‘til our hearts’ content and let SemanticMerge deal with the fallout of determining the real changes. Enough of the hyperbole, let’s get set up!

Running MassTransit within a Topshelf Windows Service

Whenever I have a need to write a Windows Service, Topshelf is pretty much the first NuGet package that I install to aid in the task. In a nutshell, Topshelf is a framework that makes writing Windows Services easier; you write a standard console application, add Topshelf to the mix and you now have an application that can be run as a console application whilst developing and debugging, and installed as a Windows Service when the need to deploy arises. It's a pretty frictionless experience, but there can be a gotcha when using Topshelf in conjunction with MassTransit and Dependency Injection. I've experienced this a few times so thought I'd write it down here for next time!

Capturing emails in Acceptance tests with Specflow

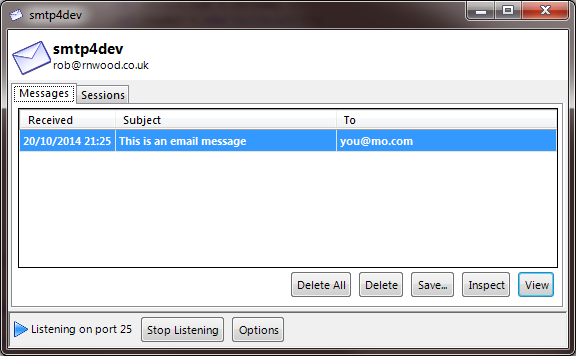

Pretty much every web application out there nowadays needs to send emails, whether it be for registration, password reset or some other bespoke functionality. When you’re working in your own dev environment and building out features, tools like smtp4dev work great for capturing emails sent to localhost; to use it, simply add the following to web.config

    <system.net>
      <mailSettings>
        <smtp deliveryMethod="Network">
          <network host="localhost" port="25" defaultCredentials="true" />
        </smtp>
      </mailSettings>
    </system.net>

start smtp4dev.exe, and you can start capturing emails sent whilst developing. You can use web.config transforms or SlowCheetah to replace the mail settings for different build configurations too. But what about acceptance tests? How do you handle emails sent during acceptance test runs?
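As an aside on the config transforms mentioned above, here is a minimal sketch of what a `Web.Release.config` transform replacing the mail settings might look like. The host `smtp.example.com` is a placeholder assumed purely for illustration; substitute whatever SMTP server your environment actually uses.

```xml
<?xml version="1.0"?>
<!-- Web.Release.config: applied over web.config when publishing with the Release configuration -->
<configuration xmlns:xdt="http://schemas.microsoft.com/XML-Document-Transform">
  <system.net>
    <mailSettings>
      <!-- Replace the whole smtp element from web.config with this one.
           smtp.example.com is a hypothetical host used for illustration only. -->
      <smtp deliveryMethod="Network" xdt:Transform="Replace">
        <network host="smtp.example.com" port="25" />
      </smtp>
    </mailSettings>
  </system.net>
</configuration>
```

With a transform like this in place, the localhost settings used with smtp4dev stay in the base web.config and never need to be edited by hand before a deployment.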

Using WSFederationAuthenticationModule with Classic ASP

I've recently been working with a client that is updating an existing Classic ASP application to ASP.NET MVC 5. As part of the upgrade, some of the systems currently hosted on an internal web server are being moved over to Windows Azure, and the existing Windows Authentication is being replaced with single sign-on (SSO) using Windows Azure Active Directory and Office 365, to leverage a cloud-based identity solution and minimise on-premises infrastructure.

Nuget Package for Managing Scripts in ASP.NET MVC 4

As per the comments on my previous post about Managing Scripts for Razor Partial Views and Templates in ASP.NET MVC, I've created a NuGet package for the HtmlHelpers used. Go take a look and leave a comment if you find them useful; I plan to add additional helpers to the package over time for general functionality that is useful in any ASP.NET MVC application.

Web API – Binding Complex types from the URI by default for HTTP GET requests

The model binding implementation in ASP.NET Web API shares some similarities with the model binding you find in ASP.NET MVC and at the same time has some striking differences. For a more detailed discussion on Web API parameter binding, check out Mike Stall’s excellent post on the topic. The topic of today’s post is how we define our own convention for parameter binding complex types when the request is an HTTP GET request.

CSV ValueProvider for model binding to collections

TL;DR

I have written a ValueProvider for binding model collections from records in a CSV file. The source code is available on BitBucket.

Model binding in ASP.NET MVC is responsible for binding values to the models that form the parameter arguments to the controller action matched to the route. But where do model binders get these values from? That’s where Value Providers come in. A ValueProvider provides values for model binding, and there are ValueProviders for providing values from